Open Source Traffic Analyzer

Introduction

Proper traffic analysis is crucial for the development of network systems, services and protocols. Traffic analysis equipment is often based on costly dedicated hardware and uses proprietary software for traffic generation and analysis. Recent advances in open source packet processing make it possible to generate and receive packets at 10 Gb/s on a regular Linux computer, which opens up very interesting possibilities for implementing a traffic analysis system based on open-source Linux.

The pktgen software package for Linux is a popular tool in the networking community for generating traffic loads for network experiments. Pktgen is a high-speed packet generator, running in the Linux kernel very close to the hardware, thereby making it possible to generate packets with very little processing overhead. The packet generation can be controlled through a user interface with respect to packet size, IP and MAC addresses, port numbers, inter-packet delay, and so on.

In this work, improvements to pktgen are proposed, designed, implemented and evaluated, with the goal of evolving pktgen into a complete and efficient network analysis tool. The rate control is significantly improved: the resolution is increased, and usability is improved by making it possible to specify the sending rate exactly. A receive-side tool is designed and implemented with support for measuring the number of packets, throughput, inter-arrival time, jitter and latency. The design of the receiver takes advantage of SMP systems and new features of modern network cards, in particular support for multiple receive queues and CPU scheduling. This makes it possible to use multiple CPUs to parallelize the work, improving the overall capacity of the traffic analyser.

A significant part of the work has been spent on investigating low-level details of Linux networking. From this work we draw some general conclusions related to high speed packet processing in SMP systems. In particular, we study how the packet processing capacity per CPU depends on the number of CPUs.

Documentation

This work was developed as part of a Master's thesis. Here you can find the final report and the presentation. They were presented in June 2010 in Stockholm.

The pktgen receiver is controlled through the proc file system. A new file is created: /proc/net/pktgen/pgrx. The control commands are:

- rx [device]: enables the receiver for a specific device. If the device name is not valid, all devices are used. (all versions)

- rx_reset: to reset the counters

- rx_disable: to disable the receiver

- display [human or script]: selects the output format

- statistics [counter, basic, basic6 or time]: selects the type of statistics collected

On the transmission side, the configuration version of the patch adds the config parameter:

- config [0 or 1]: enables or disables the configuration packet, which resets the statistics and makes it possible to calculate the losses.
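The commands above are written to the control file one at a time. A minimal sketch of a helper that does this follows. The function names `pgrx` and `rx_start`, the `PGRX` variable and the order of the commands are assumptions made for illustration, not part of the patch.

```shell
#!/bin/sh
# Control file created by the receiver patch. PGRX can be overridden
# (hypothetical variable) to try the helper without the module loaded.
PGRX="${PGRX:-/proc/net/pktgen/pgrx}"

# Write a single command to the receiver control file.
pgrx() {
    echo "$*" > "$PGRX"
}

# rx_start DEVICE [STATISTICS]
# Reset the counters, select the statistics and display modes,
# then enable the receiver on DEVICE. The ordering is an assumption.
rx_start() {
    dev="$1"
    stats="${2:-basic}"
    pgrx "rx_reset"
    pgrx "statistics $stats"
    pgrx "display human"
    pgrx "rx $dev"
}
```

With root privileges and the patched module loaded, `rx_start eth6 time` would enable the receiver on eth6 with time statistics, and `pgrx rx_disable` would stop it again. The interface name is only an example.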

Source

This file includes a Makefile and the source code. In this case it is not necessary to recompile the kernel. Decompress the file and compile it with make.

In order to insert the module:

insmod ./pktgen.ko

Recommended: Download for Linux 4.6 (22/06/2016) (includes all patches except the configuration packet)

Download for Linux 3.19 (23/11/2015) (includes all patches except the configuration packet. Uses netfilter instead of a packet handler and can use burst). Ported by Adam Drescher.

Download for Linux 3.18 (08/04/2015) (includes all patches except the configuration packet. Uses netfilter instead of a packet handler and can use burst)

Download for Linux 3.11 (12/02/2014) (includes all patches except the configuration packet. Uses netfilter instead of a packet handler)

Download for net-next v3.6-rc2 (26/09/2012) (includes all patches except the configuration packet)

Download for net-next v2.6.38-rc8 (14/03/2011) (includes all patches except the configuration packet)

Download for net-next v2.6.37 (01/12/2010) (includes basic IPv6 support)

Download for net-next v2.6.36 (12/10/2010)

Older kernel versions may not work because of changes in the kernel symbols.

The Bifrost distribution has included the receiver patches since version 7.

The source code can also be found on GitHub: https://github.com/danieltt/net-next

Patches

Here is a set of patches for different versions of the Linux kernel. Each patch depends on the previous ones, so they have to be applied in order.

The first step is to download the packages required to build the kernel. On Ubuntu, the following command is used:

apt-get install build-essential ncurses-dev git-core kernel-package

Clone the git repository of net-next. It will take some time.

git clone git://git.kernel.org/pub/scm/linux/kernel/git/davem/net-next-2.6.git

Apply the pktgen patch:

patch -p1 < [path]/pktgen.patch

Configure and compile the kernel:

make menuconfig

make

make modules_install

make install

It is usually a good idea to create an initramfs in order to load the required modules. To do that, type:

update-initramfs -c -k [kernel_version]

After compilation, reboot the system. Finally, load the module to test it:

modprobe pktgen

Patch transmission

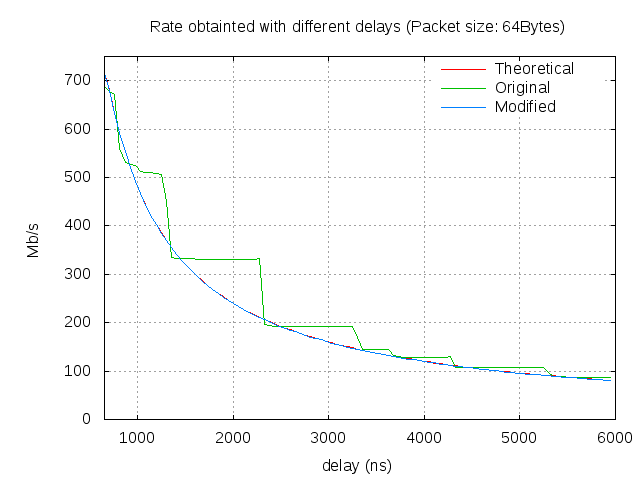

This patch improves the granularity of the delay in pktgen. The spin function of pktgen is changed to use nanosecond resolution. Two parameters are also added to set the sending rate directly, either in packets per second or in Mbps.

Both parameters are set in the configuration file of the device, /proc/net/pktgen/eth*:

- rate [rate in Mbps]

- ratep [rate in pps]

This patch has been included in the kernel since version 2.6.35-rc3 (9 June 2010).
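The relation between the two rate parameters and the inter-packet delay can be sketched with a little arithmetic. The helper names below are hypothetical, and the calculation counts only the configured packet size: preamble, inter-frame gap and any other per-frame overhead are ignored, which is an assumption of this sketch.

```shell
#!/bin/sh
# mbps_to_pps MBPS PKT_SIZE_BYTES
# Packets per second needed to reach a bit rate with fixed-size packets.
# Only the packet bytes are counted (assumption: no wire overhead).
mbps_to_pps() {
    mbps="$1"
    size="$2"
    echo $(( mbps * 1000000 / (size * 8) ))
}

# pps_to_delay_ns PPS
# Nominal time between the start of consecutive packets, in nanoseconds.
# This is why nanosecond resolution matters at high rates.
pps_to_delay_ns() {
    echo $(( 1000000000 / $1 ))
}
```

For example, `mbps_to_pps 1000 64` gives 1953125 packets per second, and `pps_to_delay_ns 1953125` gives a nominal gap of 512 ns. At such rates a delay expressed in whole microseconds could not represent the difference between neighbouring rates.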

Download for net-next v2.6.34 (25/08/2010)

Patch receiver basic

This patch adds a receiver part to the pktgen module. It is added as a protocol handler in order to avoid modifications in other parts of the kernel. It counts the packets and bytes received, as well as the throughput. In an SMP system it uses all the CPUs, with per-CPU variables.

Download for net-next v2.6.37 (25/08/2010)

Download for net-next v2.6.35 (25/08/2010)

Download for net-next v2.6.34

Patch receiver with time statistics

This patch adds the following features to the previous patch:

- Inter-arrival packet time measurement

- Jitter measurement

- Latency measurement (TX and RX in the same machine)

- Counters-only measurement

- Different display formats for the data

Download for net-next v2.6.37 (01/12/2010). (Requires patch receiver basic).

Download for net-next v2.6.35 (25/08/2010). (Requires patch receiver basic).

Download for net-next v2.6.34. (Requires patch receiver basic).

Patch receiver basic with IPv6 (NEW)

This patch adds IPv6 support for the basic statistics. To enable reception of IPv6 traffic:

echo statistics basic6 > /proc/net/pktgen/pgrx

Download for net-next v3.6-rc2 (26/09/2012). (It includes all the previous patches. Recommended).

Download for net-next v2.6.38-rc8 (14/03/2011). (It includes all the previous patches).

Patch receiver with configuration

This last patch includes a configuration packet, which is sent at the beginning of the test in order to reset the statistics on the receiver side and to announce the number of packets and bytes that will be sent.

Download for net-next v2.6.37 (01/12/2010). (Requires patch receiver with time statistics).

Download for net-next v2.6.35 (25/08/2010). (Requires patch receiver with time statistics).

Download for net-next v2.6.34. (Requires patch receiver with time statistics).

Examples

Here you can find some bash scripts as examples of how to configure the transmission and the reception.

More examples here.

- script-pktgen-rx: Script for enabling the receiver. The first argument is the interface and the second is the type of statistics.

- script-pktgen-10g-tx-ratep-pkts: Script for starting the transmission in a multicore and multiqueue system. It clears the previous configuration and sends at a specific rate (argument 1) using the number of CPUs given in argument 2.
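The following is a rough sketch of what a multiqueue transmission setup of this kind can look like. It is not the script listed above. The helper names, the `PGDIR`, `DST_IP` and `DST_MAC` variables, the placeholder addresses, the packet size and count, and the even split of the rate across CPUs are all assumptions. The pktgen commands themselves (`rem_device_all`, `add_device`, `count`, `pkt_size`, `dst`, `dst_mac`, `queue_map_min`, `queue_map_max`, `ratep`) are standard pktgen configuration commands.

```shell
#!/bin/sh
PGDIR="${PGDIR:-/proc/net/pktgen}"
DST_IP="${DST_IP:-192.0.2.1}"              # placeholder destination address
DST_MAC="${DST_MAC:-00:00:00:00:00:01}"    # placeholder destination MAC

# pgset FILE COMMAND...: write one command to a pktgen proc file.
pgset() {
    f="$1"; shift
    echo "$*" > "$PGDIR/$f"
}

# tx_setup DEVICE RATE_PPS NCPUS
# One pktgen device per CPU thread, each bound to its own TX queue.
# The total rate is split evenly across the CPUs (assumption).
tx_setup() {
    dev="$1"; rate="$2"; ncpus="$3"
    cpu=0
    while [ "$cpu" -lt "$ncpus" ]; do
        pgset "kpktgend_$cpu" "rem_device_all"       # clear previous config
        pgset "kpktgend_$cpu" "add_device $dev@$cpu"
        pgset "$dev@$cpu" "count 1000000"            # packets per device
        pgset "$dev@$cpu" "pkt_size 64"
        pgset "$dev@$cpu" "dst $DST_IP"
        pgset "$dev@$cpu" "dst_mac $DST_MAC"
        pgset "$dev@$cpu" "queue_map_min $cpu"
        pgset "$dev@$cpu" "queue_map_max $cpu"
        pgset "$dev@$cpu" "ratep $(( rate / ncpus ))"
        cpu=$(( cpu + 1 ))
    done
}
```

After `tx_setup eth6 1000000 4`, the transmission would be started with `pgset pgctrl start`, which returns when all devices have finished sending.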

Test

Figure 1 shows the improvement in granularity obtained with the transmission patch. This improvement is already present in newer versions of the kernel (2.6.35 and above). It was obtained by changing the resolution of the timers involved in the selection of the rate.

Hardware

The motherboard is a TYAN S7002. The processor of the test machine is an Intel(R) Xeon(R) CPU E5520 at 2.27 GHz. It is a quad-core processor with Hyperthreading, which in practice gives 8 available CPUs. The system has 3 GB (3 x 1 GB) of DDR3 (1333 MHz) RAM. The available network cards on the system are:

- Intel 82574L Gigabit Network (2 built in Copper Port): eth0, eth1

- 4 Intel 82576 Gigabit Network (2 x Dual Copper Port): eth2, eth3, eth4, eth5

- 2 Intel 82599EB 10-Gigabit Network (1 x Dual Fibre Port): eth6, eth7

There are two test machines available (host1 and host2).

Software

The operating system running on the test machines is Linux with the Bifrost distribution. The kernel was obtained from the net-next repository on 8 April 2010. The version is 2.6.34-rc2. Bifrost is a Linux distribution focused on networking. It is used in open source routers. The net-next repository is the kernel networking development repository.

Some results

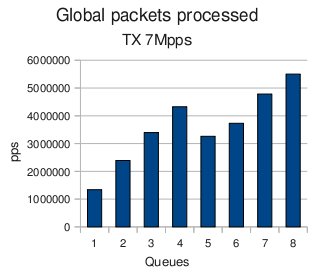

The receiver test was done by changing the number of queues on the receiver. Each queue is attached to a different processor. Each CPU must have the same number of queues, otherwise the load is not balanced and performance is reduced. The objective of this test is to check the amount of traffic that the system is able to process using different numbers of queues and CPUs. Different flows are also simulated, because a single flow always goes to the same queue in order to avoid packet reordering.

The results of the test are plotted in Figure 2. From 1 to 4 CPUs, the maximum received throughput increases. With 5 CPUs there is a drop in the received capacity. With 6 CPUs the performance is more or less the same as with four. With 7 and 8 the results are better, but the increase is not the same as in the first case. In order to understand the global behaviour, it is necessary to look at the details of each CPU.
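One common way on Linux to attach a receive queue to a given CPU is to set the affinity of the queue's interrupt. The text does not say which method was used in these tests, so the following is only a sketch under that assumption. The helper names and the `IRQDIR` variable are hypothetical, and the IRQ number of each queue has to be looked up in /proc/interrupts.

```shell
#!/bin/sh
IRQDIR="${IRQDIR:-/proc/irq}"

# cpu_mask CPU: hexadecimal affinity mask with only bit CPU set.
cpu_mask() {
    printf '%x\n' $(( 1 << $1 ))
}

# pin_irq IRQ CPU: deliver interrupt IRQ only to the given CPU.
pin_irq() {
    cpu_mask "$2" > "$IRQDIR/$1/smp_affinity"
}
```

For instance, if the interrupts of the eight receive queues had consecutive IRQ numbers starting at some value found in /proc/interrupts, calling `pin_irq` once per queue with CPUs 0 to 7 would give each CPU exactly one queue, which matches the balanced setup described above.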

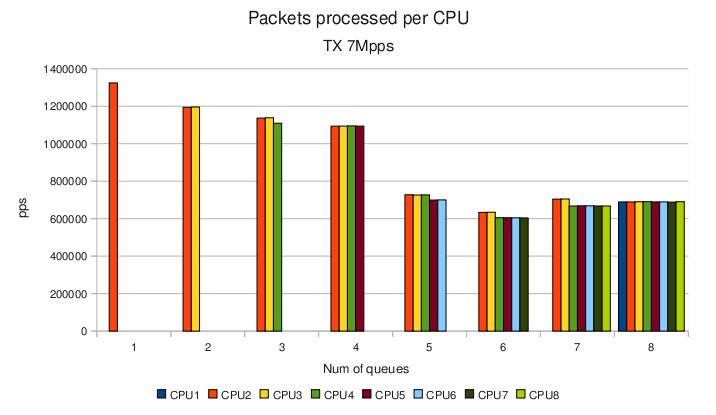

This is shown in Figure 3. Each time a queue is added, the individual performance drops. This is especially dramatic when 5 queues are used. In this case, the gain from the additional queue is smaller than the drop in performance. The drop in performance occurs when at least one processor is using Hyperthreading, so it is possible that the internal architecture of the processor has some influence on the results.

The results show that some resources are shared between the CPUs, and that the synchronization time and the use of common resources are key factors in the performance.

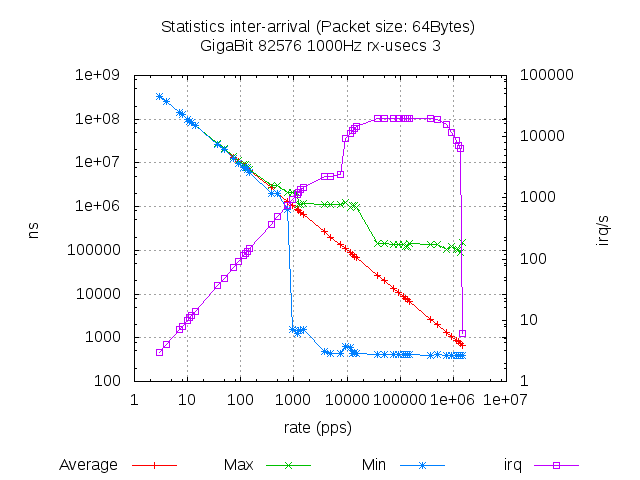

Figure 4 shows the results of the inter-arrival test at different rates. At low rates (less than 1 kpps), the maximum and minimum inter-arrival times are similar to the average. Above this point, the minimum drops to its lowest value and remains constant.

The rate at which the drop occurs depends on the transmitter, which sends the packets with a small inter-departure time. In this case, polling starts to work. The average value is exactly as expected. There are also two steps in the maximum. The first one is due to the transmission side and is caused by the timer frequency, which is responsible for the scheduling time. Further details can be found in Section 5.2.2 of the thesis report.

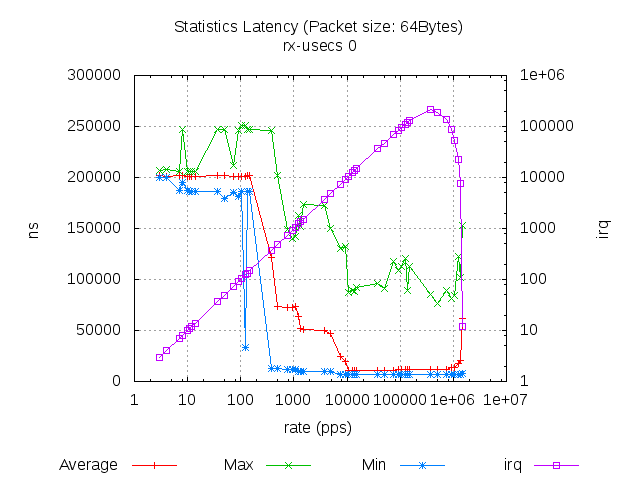

The latency measurement implemented in this project has the limitation that the transmitter and the receiver have to be on the same machine in order to avoid time synchronization problems. Figure 5 shows the latency obtained with the default configuration and the Gigabit card.

An unexpected behaviour is observed: at very low rates the latency is higher than at rates in the middle of the capacity. This shape has to be investigated in future work. Nevertheless, the graph has the same shape for different packet sizes, the only difference being the rate. The reason for this behaviour must lie in the driver module or in the hardware, because pktgen uses the public functions offered by the driver API.

More results can be found in the Thesis report.

About

I'm Daniel Turull. I was a research engineer at the Telecommunications System Laboratory at KTH in Stockholm, where I did my Master's thesis about pktgen.

I graduated as a Telecommunications Engineer from the Technical University of Catalonia (UPC) in Barcelona in 2010.

This work was done in collaboration with Robert Olsson and with the support of the TSlab.

For further information, or if you have any suggestions, contact me at danieltt(at)kth.se.

You can also find me on LinkedIn.